This is a continuation of the blog post 12 Principles of Good Software Design.

6. An idiom should help isolate functionality into well-defined components.

Object-oriented software development is a fantastic way to build applications, especially user interface applications designed to run on a mobile device. You can isolate functionality into well-defined views, attach them to well-defined models where the internal representation is hidden behind a well-defined interface, and coordinate their behavior with well-defined view controllers and the like. Events can be handled in a variety of well-defined ways, with singletons processing global messages and dispatching them to registered notifiers, or passed up through a well-defined command chain. And each object is built with a well-defined interface, handles one thing, and can be modified without causing the entire application to fall like a house of cards.

It’s a fantastic dream, one we routinely violate every. single. day.

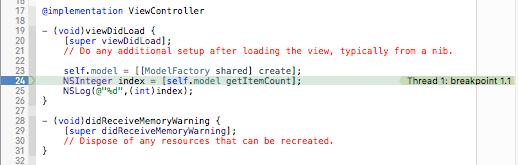

Here’s a common way a lot of table views are assembled in iOS. Suppose we have a list of data represented as a collection of NSDictionary objects stored in an NSArray, which is to be displayed in a table. So we build the following code to populate the table cells:

- (NSInteger)tableView:(UITableView *)tableView numberOfRowsInSection:(NSInteger)section

{

return self.data.count;

}

- (UITableViewCell *)tableView:(UITableView *)tableView cellForRowAtIndexPath:(NSIndexPath *)indexPath

{

UITableViewCell *cell = [tableView dequeueReusableCellWithIdentifier:@"cell" forIndexPath:indexPath];

NSDictionary *d = self.data[indexPath.row];

NSString *title = d[@"title"];

NSString *addr = [NSString stringWithFormat:@"%@, %@",d[@"city"],d[@"state"]];

NSString *subtitle = addr;

cell.textLabel.text = title;

cell.detailTextLabel.text = subtitle;

return cell;

}

Now it happens that having our view controller code parse the contents of the array and calculate how the contents are to be presented works okay in the simple case. But now suppose we need to extend the functionality of this table by displaying an image associated with each record. Each record identifies its image by file name, and the image is pulled from a known server location.

So we add code to our view controller to download the image. The code caches images in an NSCache object owned by the view controller and uses an NSURLSession object, also initialized by the view controller:

- (void)downloadImage:(NSString *)path withCallback:(void (^)(UIImage *image))callback

{

// NSCache is read with objectForKey:, not the KVC method valueForKey:.

UIImage *image = [self.cache objectForKey:path];

if (image) {

callback(image);

return;

}

void (^copyCallback)(UIImage *image) = [callback copy];

NSString *urlPath = [NSString stringWithFormat:@"http://myserver.com/image/%@",path];

NSURL *url = [NSURL URLWithString:urlPath];

NSMutableURLRequest *urlRequest = [[NSMutableURLRequest alloc] initWithURL:url cachePolicy:NSURLRequestReloadIgnoringLocalCacheData timeoutInterval:10];

// The data task is created suspended; nothing is downloaded until it is resumed.

NSURLSessionDataTask *task = [self.session dataTaskWithRequest:urlRequest completionHandler:^(NSData *data, NSURLResponse *response, NSError * error) {

// We should check for errors here.

UIImage *img = data ? [UIImage imageWithData:data] : nil;

dispatch_async(dispatch_get_main_queue(), ^{

// Only cache a successfully decoded image; NSCache cannot store nil.

if (img) {

[self.cache setObject:img forKey:path];

}

copyCallback(img);

});

}];

[task resume];

}

And we add our image to the code which loads the table cells:

- (UITableViewCell *)tableView:(UITableView *)tableView cellForRowAtIndexPath:(NSIndexPath *)indexPath

{

UITableViewCell *cell = [tableView dequeueReusableCellWithIdentifier:@"cell" forIndexPath:indexPath];

NSDictionary *d = self.data[indexPath.row];

NSString *title = d[@"title"];

NSString *addr = [NSString stringWithFormat:@"%@, %@",d[@"city"],d[@"state"]];

NSString *subtitle = addr;

cell.textLabel.text = title;

cell.detailTextLabel.text = subtitle;

// Load the image

cell.imageView.image = [UIImage imageNamed:@"missing"];

[self downloadImage:d[@"image"] withCallback:^(UIImage *image) {

cell.imageView.image = image;

}];

return cell;

}

But, as it turns out, this has a bug: cells in our table view are reused, and if we scroll fast enough, an image can finish loading for a particular table cell after that cell has been repurposed for another row, leaving the wrong image on display.

So we attach a property to the table cell so we know which image the cell should be displaying, and we verify, after the image is loaded, that the image path hasn’t changed:

- (UITableViewCell *)tableView:(UITableView *)tableView cellForRowAtIndexPath:(NSIndexPath *)indexPath

{

UITableViewCell *cell = [tableView dequeueReusableCellWithIdentifier:@"cell" forIndexPath:indexPath];

NSDictionary *d = self.data[indexPath.row];

NSString *title = d[@"title"];

NSString *addr = [NSString stringWithFormat:@"%@, %@",d[@"city"],d[@"state"]];

NSString *subtitle = addr;

cell.textLabel.text = title;

cell.detailTextLabel.text = subtitle;

// Load the image

NSString *path = d[@"image"];

objc_setAssociatedObject(cell,ImageNameKey,path,OBJC_ASSOCIATION_COPY);

cell.imageView.image = [UIImage imageNamed:@"missing"];

[self downloadImage:d[@"image"] withCallback:^(UIImage *image) {

NSString *curPath = objc_getAssociatedObject(cell, ImageNameKey);

if ([curPath isEqualToString:path]) {

cell.imageView.image = image;

}

}];

return cell;

}
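
The snippet above relies on an ImageNameKey that this post never shows, and the associated-object functions come from the Objective-C runtime header. As a minimal sketch, assuming the key is simply the address of a file-scope static variable (a common convention, not necessarily what the original project used), the declaration and two small hypothetical helpers, SetExpectedImagePath and ExpectedImagePath, might look like this:

```objc
#import <Foundation/Foundation.h>
#import <objc/runtime.h>

// Assumed declaration: associated-object keys are compared by pointer
// identity, so the address of a static variable makes a unique key.
static char ImageNameKeyStorage;
static const void *ImageNameKey = &ImageNameKeyStorage;

// Hypothetical helper: tags an object (a reused cell, in the post) with the
// path of the image it is currently waiting for.
static void SetExpectedImagePath(id object, NSString *path)
{
    objc_setAssociatedObject(object, ImageNameKey, path, OBJC_ASSOCIATION_COPY);
}

// Hypothetical helper: returns the path most recently attached to the object,
// or nil if none has been attached.
static NSString *ExpectedImagePath(id object)
{
    return objc_getAssociatedObject(object, ImageNameKey);
}
```

Because the key lives outside the cell class, any code that knows the key can read or overwrite the tag, which is part of why this approach scatters responsibility.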

Notice that this sort of code is not atypical of a lot of iOS applications. The model is little more than a chunk of data, the view is little more than a dumb holder, and the view controller winds up doing everything: network calls, caching, formatting table cells, validating images are correctly loaded.

Worse, suppose we now had to alter the functionality of our table view to add other fields, to customize the fields, and to alter the height of the different table view cells depending on the size of the content being provided.

The flaw here is that, due to a misapprehension as to the roles of models, views and view controllers, we have violated the essence of partitioning our code into well-defined components, where each component does one thing and does one thing well, without significantly overloading a single object with too much functionality.

The key here is that in the original Model-View-Controller papers, there are no requirements on the complexity of the views or the models, nor are there any requirements on having to roll in every single bit of functionality into a single monolithic controller. (Feel free to browse the papers linked here; I’ll wait.)

In fact, the perfect example of a view which contains complex functionality is the UITextField object; it is responsible for taking in text, presenting a keyboard, editing the text, interacting with the clipboard, and handling bidirectional text when internationalized. Hardly a “lightweight” object–though we think of it as such, because it has well-defined functionality and a simple interface.

So what is our current View Controller doing, exactly, beyond coordinating messages between the views and the model?

- Loading images from a remote server.

- Formatting the data displayed in a table view.

- Dynamically loading images to the table view cells.

In fact, one could argue that we have no model–just an array of data objects, and so our view controller also contains some of the business logic that we should be putting in the model.
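
To make the “no model” point concrete, here is a minimal sketch of what a small model object for one record could look like. The class name Place and its property names are hypothetical; only the dictionary keys (title, city, state, image) come from the code above. The rest of this post keeps passing the raw NSDictionary around, so treat this as an illustration rather than part of the refactoring that follows.

```objc
#import <Foundation/Foundation.h>

// Hypothetical model class wrapping one record from the data array.
@interface Place : NSObject

@property (copy, nonatomic, readonly) NSString *title;
@property (copy, nonatomic, readonly) NSString *imagePath;

- (instancetype)initWithDictionary:(NSDictionary *)dictionary;

// The "city, state" formatting rule lives with the data, not in a controller.
- (NSString *)subtitle;

@end

@implementation Place
{
    NSString *_city;
    NSString *_state;
}

- (instancetype)initWithDictionary:(NSDictionary *)d
{
    if (nil != (self = [super init])) {
        _title = [d[@"title"] copy];
        _city = [d[@"city"] copy];
        _state = [d[@"state"] copy];
        _imagePath = [d[@"image"] copy];
    }
    return self;
}

- (NSString *)subtitle
{
    return [NSString stringWithFormat:@"%@, %@", _city, _state];
}

@end
```

With something like this in place, the knowledge of which keys exist and how an address is assembled sits behind one interface instead of being repeated wherever a row is displayed.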

So let’s reorganize our code to refactor everything into its own well-defined component.

First, the image loading mechanism that we created above properly belongs in its own class. That’s because it is quite possible we would be reusing the code elsewhere as well. Further, if we find bugs or if we decide to migrate to a third-party library, we only have one interface to change, rather than countless view controllers, each of which was responsible for loading images.

This gives us the following ImageLoader class:

#import <UIKit/UIKit.h>

@interface ImageLoader : NSObject

+ (ImageLoader *)shared;

- (void)downloadImage:(NSString *)path

withCallback:(void (^)(NSString *path, UIImage *image))callback;

@end

and the following implementation:

#import "ImageLoader.h"

@interface ImageLoader()

@property (strong, nonatomic) NSURLSession *session;

@property (strong, nonatomic) NSCache *cache;

@end

@implementation ImageLoader

- (id)init

{

if (nil != (self = [super init])) {

self.cache = [[NSCache alloc] init];

NSURLSessionConfiguration *config = [NSURLSessionConfiguration ephemeralSessionConfiguration];

self.session = [NSURLSession sessionWithConfiguration:config];

}

return self;

}

+ (ImageLoader *)shared

{

static ImageLoader *singleton;

static dispatch_once_t onceToken;

dispatch_once(&onceToken, ^{

singleton = [[ImageLoader alloc] init];

});

return singleton;

}

- (void)downloadImage:(NSString *)path

withCallback:(void (^)(NSString *path, UIImage *image))callback

{

// NSCache is read with objectForKey:, not the KVC method valueForKey:.

UIImage *image = [self.cache objectForKey:path];

if (image) {

callback(path,image);

return;

}

void (^copyCallback)(NSString *path, UIImage *image) = [callback copy];

NSString *urlPath = [NSString stringWithFormat:@"http://myserver.com/image/%@",path];

NSURL *url = [NSURL URLWithString:urlPath];

NSMutableURLRequest *urlRequest = [[NSMutableURLRequest alloc] initWithURL:url cachePolicy:NSURLRequestReloadIgnoringLocalCacheData timeoutInterval:10];

// The data task is created suspended; nothing is downloaded until it is resumed.

NSURLSessionDataTask *task = [self.session dataTaskWithRequest:urlRequest completionHandler:^(NSData *data, NSURLResponse *response, NSError * error) {

// We should check for errors here.

UIImage *img = data ? [UIImage imageWithData:data] : nil;

dispatch_async(dispatch_get_main_queue(), ^{

// Only cache a successfully decoded image; NSCache cannot store nil.

if (img) {

[self.cache setObject:img forKey:path];

}

copyCallback(path,img);

});

}];

[task resume];

}

@end

Notice the advantage of this class: it does exactly one thing and one thing only. It loads images from our server. That’s it.

And because it only does one thing and does it simply, it is easy for us to incorporate into our code, and it is easy for us to fix problems if we discover our image loading process seems broken somehow.
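
Having a single class also gives us one obvious place to fill in the “We should check for errors here” comment. As a rough sketch, assuming we only want to accept non-empty bodies from successful HTTP responses, a helper like the hypothetical ValidatedImageData below could be called at the top of the completion handler before decoding the image. The function name and the exact acceptance rules are illustrative assumptions, not part of the original loader.

```objc
#import <Foundation/Foundation.h>

// Hypothetical helper: returns the response body only when the request
// looks successful, and nil otherwise.
static NSData *ValidatedImageData(NSData *data, NSURLResponse *response, NSError *error)
{
    // Transport-level failure (timeout, no connection) or an empty body.
    if (error != nil || data.length == 0) {
        return nil;
    }

    // For HTTP responses, accept only 2xx status codes (rejects 404, 500, ...).
    if ([response isKindOfClass:[NSHTTPURLResponse class]]) {
        NSInteger status = ((NSHTTPURLResponse *)response).statusCode;
        if (status < 200 || status > 299) {
            return nil;
        }
    }

    return data;
}
```

Every caller of the loader would benefit from the check at once, which is the kind of single point of change the refactoring is meant to buy.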

The second thing we can remove from the View Controller is the functionality of parsing and loading the contents of the Table Cell. This refactoring is important in that it makes the view object (in this case, our UITableViewCell object) responsible for its presentation, rather than placing responsibility for presentation inside the view controller.

Having the Table Cell handle itself will also be instrumental in the third step we plan to perform, which can’t be done without this second step.

(Yes, I know this is a point of contention between programmers who believe views should be dumb and those who believe views can be smart. The point of contention is the belief that views should not talk to models or know anything about models. However, this notion of a Model-View-Presenter pattern is not “Model-View-Controller”; views in our paradigm do not need to be completely passive. I would argue that Model-View-Presenter is appropriate only if you are architecturally constrained by a UI which does not allow you to build more intelligent views that are able to participate in their own lifecycle or are constructed to understand the context in which they are used.)

So refactoring our table view cell into its own class, we get a new class TableViewCell:

#import <UIKit/UIKit.h>

@interface TableViewCell : UITableViewCell

- (void)setData:(NSDictionary *)data;

@end

Notice the simplicity of the interface; as the view cell is responsible for parsing and understanding the contents at a given row, we only need to pass the row into the cell.

The implementation is also straightforward:

#import "TableViewCell.h"

#import "ImageLoader.h"

@interface TableViewCell ()

@property (copy, nonatomic) NSString *pathImage;

@end

@implementation TableViewCell

- (void)setData:(NSDictionary *)d

{

NSString *title = d[@"title"];

NSString *addr = [NSString stringWithFormat:@"%@, %@",d[@"city"],d[@"state"]];

NSString *subtitle = addr;

self.textLabel.text = title;

self.detailTextLabel.text = subtitle;

// Load the image

NSString *loadPath = d[@"image"];

self.pathImage = loadPath;

self.imageView.image = [UIImage imageNamed:@"missing"];

[[ImageLoader shared] downloadImage:loadPath withCallback:^(NSString *path, UIImage *image) {

if ([self.pathImage isEqualToString:path]) {

self.imageView.image = image;

}

}];

}

@end

Notice the advantage of this class: it does exactly one thing and one thing only. It loads the table view cell with the contents of our data. That’s it.

And because it only does one thing and does it simply, it is easy for us to incorporate into other table views, and it is easy for us to fix problems if we discover problems with how we’re parsing the data for presentation.

Also notice a side effect: the table view cell has become responsible for loading the image that is being displayed in itself. Our view controller now reduces to something far simpler to maintain:

- (NSInteger)tableView:(UITableView *)tableView numberOfRowsInSection:(NSInteger)section

{

return self.data.count;

}

- (UITableViewCell *)tableView:(UITableView *)tableView cellForRowAtIndexPath:(NSIndexPath *)indexPath

{

TableViewCell *cell = [tableView dequeueReusableCellWithIdentifier:@"cell" forIndexPath:indexPath];

[cell setData:self.data[indexPath.row]];

return cell;

}

Our view controller is no longer responsible for loading the image, so it no longer has to maintain a cache or even understand that some images are being loaded in the background.

We can even take this one step further: rather than have our table view cell be responsible for handling loading of the image, we could create a separate image view class which handles loading the image itself.

So here’s a simple implementation of our image view class, which extends the UIImageView class:

#import <UIKit/UIKit.h>

@interface ImageView : UIImageView

- (void)imageFromPath:(NSString *)path;

@end

and our implementation:

#import "ImageView.h"

#import "ImageLoader.h"

@interface ImageView ()

@property (copy) NSString *path;

@end

@implementation ImageView

- (void)imageFromPath:(NSString *)loadPath

{

self.image = [UIImage imageNamed:@"missing"];

self.path = loadPath;

[[ImageLoader shared] downloadImage:loadPath withCallback:^(NSString *path, UIImage *image) {

if ([self.path isEqualToString:path]) {

self.image = image;

}

}];

}

@end

Notice the advantage of this class: it does exactly one thing and one thing only. It loads the image and displays it given the path of the image to display. That’s it.

And because it only does one thing and does it simply, it is easy for us to use throughout our application. Further, because it is only one class, if we decide to incorporate some more fancy features (such as a loading animation which fades the downloaded image from a black screen), we could add that by updating the code only in one place–and see the functionality throughout our application.

We inherit from the UIImageView class so we get all of the benefits of that class as well. This allows us to greatly simplify our table view cell’s initialization code (after we’ve changed the image view to our custom implementation):

- (void)setData:(NSDictionary *)d

{

NSString *title = d[@"title"];

NSString *addr = [NSString stringWithFormat:@"%@, %@",d[@"city"],d[@"state"]];

NSString *subtitle = addr;

self.textLabel.text = title;

self.detailTextLabel.text = subtitle;

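// Assumes the cell's image view has been replaced with our ImageView subclass; the built-in imageView property is typed as UIImageView, hence the cast.

[(ImageView *)self.imageView imageFromPath:d[@"image"]];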

}

@end

Of course sometimes you can take this to the point of absurdity. But the advantage of constructing our application using reusable components is that we can reuse them–and reuse depends on us isolating functionality into well-defined components, and having each component do its one thing well.

Besides, our applications never become simpler; product managers and business managers and program managers almost never ask us to remove features or to remove the presentation of things on our applications. They are forever pushing new functionality and new features onto our applications.

I guarantee you if you start with a very simple image loader class, eventually new requirements will expand the size of that class. Table view cells eventually contain more information, or contain tappable regions which expand to present more information. Image view loaders become more slick, with dynamic animating components and wait cursors and broken image displays.

So if your table view cell implementation starts at 10 lines, it won’t stay 10 lines for long–even if you keep to the mantra of keeping your components’ behavior simple and well defined, doing only one thing and doing that one thing well.

It is worth noting there is an anti-pattern to this idiom, which is rather common in the field and should be avoided at all costs.

Let’s take our current example. Suppose we have a view controller and our table cell which handles presenting the contents of a row in a table view. Suppose, however, we haven’t added loading images into our table cell yet. So our TableViewCell class’s setData method looks like the following:

- (void)setData:(NSDictionary *)d

{

NSString *title = d[@"title"];

NSString *addr = [NSString stringWithFormat:@"%@, %@",d[@"city"],d[@"state"]];

NSString *subtitle = addr;

self.textLabel.text = title;

self.detailTextLabel.text = subtitle;

}

@end

And our View Controller’s table data source methods look like the following:

- (NSInteger)tableView:(UITableView *)tableView numberOfRowsInSection:(NSInteger)section

{

return self.data.count;

}

- (UITableViewCell *)tableView:(UITableView *)tableView cellForRowAtIndexPath:(NSIndexPath *)indexPath

{

TableViewCell *cell = [tableView dequeueReusableCellWithIdentifier:@"cell" forIndexPath:indexPath];

[cell setData:self.data[indexPath.row]];

return cell;

}

Now we’ve been assigned to load images from a path location into our table cells. Rather than allow the table cell code to do what it does best (manage the presentation of the data in the table cell), instead, we add the code to handle loading the image in the background into our view controller:

- (NSInteger)tableView:(UITableView *)tableView numberOfRowsInSection:(NSInteger)section

{

return self.data.count;

}

- (UITableViewCell *)tableView:(UITableView *)tableView cellForRowAtIndexPath:(NSIndexPath *)indexPath

{

TableViewCell *cell = [tableView dequeueReusableCellWithIdentifier:@"cell" forIndexPath:indexPath];

NSDictionary *d = self.data[indexPath.row];

[cell setData:d];

// Load the image

NSString *loadPath = d[@"image"];

objc_setAssociatedObject(cell,ImageNameKey,loadPath,OBJC_ASSOCIATION_COPY);

cell.imageView.image = [UIImage imageNamed:@"missing"];

[[ImageLoader shared] downloadImage:loadPath withCallback:^(NSString *path, UIImage *image) {

NSString *curPath = objc_getAssociatedObject(cell, ImageNameKey);

if ([curPath isEqualToString:path]) {

cell.imageView.image = image;

}

}];

return cell;

}

Now look at the mess we’ve made.

We’ve violated the contract of the TableViewCell class by taking part of its responsibility for presentation, and moved it into the view controller.

“So what?” you may say.

Well, the problems with this anti-pattern are:

First, because you have violated the contract of allowing the table cell view to handle presentation of the data object, you have made that class more difficult to manage. A change in the data model now has to be chased through both the table cell class and the various view controllers which use that class.

Second, and because of this, bugs are harder to fix, as the responsibility for presentation is not only scattered across several possible view controllers, but is also split between view controllers and an incomplete table view cell. This means a change to the software will require spelunking through the source code, rather than going to the one location where the functionality is handled.

Life is too short and too complicated as it is to set ourselves up for failure.